On Slop

How can you tell when the Words came out of the Bag, rather than from a human brain?

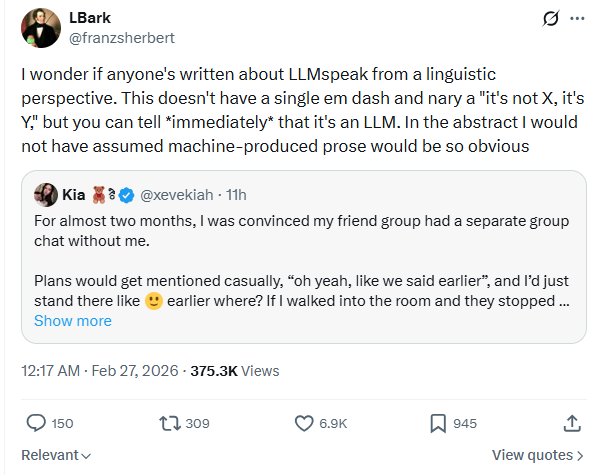

Over on ex-Twitter, I was struck by this QT comment labeling something as the work of an LLM (screenshot because petulant billionaires):

I’ll copy the full text of the tweet they’re quoting, because it’s helpful to see the whole thing:

For almost two months, I was convinced my friend group had a separate group chat without me.

Plans would get mentioned casually, “oh yeah, like we said earlier”, and I’d just stand there like :-) earlier where? If I walked into the room and they stopped laughing, my brain immediately titled it: Season 2: The Quiet Exile. I started analyzing delivery times, inside jokes, who viewed my stories but didn’t reply. Every late response felt intentional. Every “we forgot to tell you” felt strategic.

One night I finally joked, “So what’s the name of the secret group chat?”

They all looked confused.

Turns out there was another group chat, but it was for planning a surprise birthday thing for me. The reason they’d go quiet? They were terrible at lying. The “earlier” conversations? About work, not me. The late replies? Two of them had just started new jobs and one was going through a breakup.

Meanwhile, I had already mentally written a betrayal arc, drafted my villain origin speech, and emotionally distanced myself for protection.

The stupidest part? Nobody was excluding me.

I was just overprotecting myself from a threat that didn’t exist.

That’s when I realized, sometimes the only person putting me on the outside… is me.

This was striking, because it doesn’t read as unmistakably AI slop to me. I mean, it’s absolutely slop, but I could readily believe it was produced by a human rather than an LLM.

From looking at the replies, I think this is a function of platform-based expectations. A lot of the “tells” people are pointing to basically amount to it seeming a little too polished, which I think is right… for ex-Twitter. It feels like something that’s been through several rounds of (self-)edits, which is not what you expect to see on a microblogging platform. Ex-Twitter is, for most people, a space for quickly dashed-off thoughts, and as a result you tend to see a lot more rough edges: typos, little grammatical glitches, items that seem like hooks for a future callback that never comes, awkward asides, etc. Whatever this is, it’s not rough in that “I’m typing this on my phone and hitting ‘send’ as soon as I finish” way that you expect from ex-Twitter (or Bluesky or whatever).

On the other hand, I see a lot of this on other platforms. Chiefly Facebook (which I read a lot because I am Of A Certain Age), but occasionally LinkedIn, and some old-school blogs and email lists. I absolutely recognize the style, from the way I reflexively reach for “Page down” about two sentences in.

A lot of the replies to the linked QT specifically mention LinkedIn, where this kind of overproduced sludge runs rampant. It’s the kind of thing you get when people who aren’t confident in their natural writing voice try to produce something personal for a “professional” platform. It’s overly polished in a way that removes major grammatical missteps but also removes any genuine charm. It reads like it’s been through several rounds of laborious edits, but not with an actual professional editor who would know when to correct errors and when to lean into them.[1]

Importantly, this level of slop has existed on Facebook and LinkedIn for years; I’ve been skipping lightly past posts like this for far longer than LLMs have been readily accessible. Which is, of course, why this is what you would expect to get from an LLM: these models have been trained on terabytes of this kind of quasi-motivational, pseudo-therapeutic pablum, which is all over the public Internet.

The platform mismatch is probably the strongest indicator that this actually is LLM output. This kind of post has become a lot more common on ex-Twitter of late, as the introduction of long posts and monetization has made it possible to earn money by tweeting sludge. That’s a low-margin business, so people attempting it are strongly incentivized to outsource its production to some sort of LLM, which can dip into a roiling cauldron of linear algebra and ladle out enormous quantities of this literary bone broth. The mismatch is by no means absolute proof of a digital origin, though, just highly suggestive.

I’m thinking about this a bit at the moment, because the students in my class this term are (supposed to be) at work on research papers relating to quantum optics and quantum information. I’m going to need to turn their first drafts around very quickly, so I’m already bracing myself for having to mark up a ton of student writing next weekend. And while I am not overly worried about this particular group of students handing in LLM output (they’re junior and senior physics majors, most of whom I’ve had in class before), there is a tiny nagging voice in the back of my head whispering “Yeah, but what if they do? How would you even tell?”

This problem is particularly acute in the STEM fields, I suspect, because the goal of most technical writing is the kind of correct but charmless prose you get out of LLMs.[2] That’s not really the aspirational ideal of technical writing (a phrase I typed in the first pass at the previous sentence but replaced), because the very best scientists do manage to write in a way that is both technically correct and genuinely charming. That’s very hard to do well, though, and when it goes badly it goes very badly. So we’re mostly taught (and mostly teach) that if you’re likely to err, it’s way more important to be correct than charming.

The tell for LLM-generated writing, I think, would be something that reflects an implausibly good understanding of the subject matter, one that goes deeper than what I presented in class or assigned as reading. Honestly, though, I don’t think there’s a great chance of that coming out of an LLM.[3] On the topic of quantum optics and quantum information, LLMs remain much more likely to produce something that’s slick but kind of superficial, tending toward sentences that deploy technical terms in ways that are grammatically correct but semantically empty. But that’s hard to distinguish from the genuine work of students who are good writers but out of their depth on the technical side.[4]

Which means I’m mostly going to be looking for rough edges as indicators of genuine student work— words that don’t quite mean what they’re using them to mean, little deficiencies of organization or topic coverage, etc. Which is pretty thin. And, of course, I require them to do in-class presentations between the rough draft and final paper, which is a really good backstop in terms of figuring out what they actually understand about their topic.

As I said, I’m not particularly worried about this specific class, because I know most of these students reasonably well. The general issue looms over all of academia, though, so like everyone else employed in higher ed, it’s something I have to think about even if I ultimately dismiss it as a direct concern.

That’s this week in Fretting About the Bag of Words.

[1] To paraphrase an editor friend, the point of learning the rules of writing is to be able to know the right time to break them. Really good writing almost always includes some elements that are not strictly correct, but that work nonetheless, and there’s real skill in both producing those elements and recognizing them in someone else’s writing.

[2] A problem that’s exacerbated by the fact that single-author publications are exceedingly rare in STEM, which means that research articles inevitably have a bit of a “written by a committee” feel to them.

[3] I might get it from a couple of students, actually: I’ve got some math (double) majors in the class who are definitely better at, say, the number theory involved in a lot of quantum algorithms than I am.

[4] Many, many years ago, the first time I taught quantum optics, I met with a student to go over their rough draft and started the conversation by saying “You’re a really good writer. Good enough, in fact, that I almost can’t tell that you have no idea what you’re talking about.” He immediately copped to it, and we went through the stuff he hadn’t been able to figure out, and his final paper was worlds better.

(That particular student went to law school right after graduation, though he switched to a Physics Ph.D. program a year or so later…)
