Over the last ten years or so I’ve become much more a guy who writes books than a research physicist, but when people in science-y contexts ask what I do, I still identify myself as a physicist first. When they ask “What kind of physics?” I say that my background is in Atomic, Molecular, and Optical (AMO) physics, and more specifically that I’m a cold-atom guy. My Ph.D. thesis research was on collisions between xenon atoms at ultra-cold temperatures, my post-doc was on quantum effects in a Bose-Einstein Condensate (BEC), and I got my day job as a professor by proposing to do collision studies, then got an NSF grant to use laser cooling techniques to study radioactive backgrounds in astrophysical detectors. I wrote about the pre-faculty parts of this in some detail at my old blog back in the day.

This can all be traced to a fairly specific event back in the winter of 1991-92 (I think it was probably December, but it was thirty years ago, so I’m a little hazy on the exact date), when I went to a physics department seminar talk. This was not incredibly unusual behavior for me at the time, as I was a junior at Williams majoring in physics, but most of those talks were highly forgettable (and, indeed, are long forgotten). This particular talk was pitched as something of a big deal (I think it was funded by some external lectureship program), and featured a French professor I had never heard of, Claude Cohen-Tannoudji.

I didn’t go into this expecting much— I think I went mostly because they promised dinner out at a restaurant afterwards— but the talk absolutely blew me away. Cohen-Tannoudji is an excellent speaker, and always makes even very complicated topics seem completely clear and obvious (later on, I often struggle to reproduce what he talked about, but while he’s speaking it all makes perfect sense). His talk was about the subfield of laser cooling, specifically the Sisyphus cooling mechanism that had only been discovered a few years previously, and was still an active topic of interest.

This really caught my interest for a number of reasons. First of all, the whole concept of laser cooling is brilliantly counter-intuitive— you shine lasers on a gas of atoms, and as a result they get super cold, to within millionths of a degree above absolute zero. It’s also really simple and elegant on a conceptual level: light is a stream of photons, those photons carry momentum, and that means that when atoms absorb or emit photons, they get a little “kick” in the direction the light was moving. Clever physicists can exploit these kicks to slow the motion of the atoms, and a gas of slow-moving atoms is a gas with a low temperature; thus, laser cooling.
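To put a rough number on the size of one of those kicks, here is a minimal Python sketch. The choice of rubidium-87 and its 780 nm cooling transition is an assumption made for illustration (the text above does not name an atom), and the 300 m/s room-temperature speed is only an order-of-magnitude figure.

```python
# Rough size of a single photon "kick" on an atom.
# Assumptions (not from the text): rubidium-87, cooling light near 780 nm,
# and a typical room-temperature atomic speed of about 300 m/s.

H = 6.62607015e-34       # Planck constant, J*s
AMU = 1.66053906660e-27  # atomic mass unit, kg


def recoil_velocity(mass_kg, wavelength_m):
    """Velocity change from absorbing one photon: v = h / (m * wavelength)."""
    return H / (mass_kg * wavelength_m)


mass_rb87 = 86.909 * AMU   # kg (assumed atom)
wavelength = 780.24e-9     # m (assumed transition)

v_recoil = recoil_velocity(mass_rb87, wavelength)
v_thermal = 300.0          # m/s, order-of-magnitude assumption
kicks_needed = v_thermal / v_recoil

print(f"recoil velocity per photon: {v_recoil * 1e3:.2f} mm/s")
print(f"photons needed to stop a {v_thermal:.0f} m/s atom: about {kicks_needed:,.0f}")
```

Each kick changes the atom's speed by only a few millimeters per second, so slowing a room-temperature atom takes tens of thousands of absorbed photons. That is workable because an atom can scatter millions of photons per second.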

Laser cooling is also the rare example of a field where real-world physics gives better results than a simplified model would lead you to expect. If you do the usual physicist-y “spherical cow” trick of abstracting away most of the internal structure of the atoms and just treat them as systems with only two energy states, you can predict a lower limit for the temperature you can expect to reach with laser cooling, which is somewhere around a hundred microkelvin (that is, a hundred one-millionths of a degree above absolute zero). In actual experiments, though, it’s relatively easy to reach temperatures well below that limit.
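The two-level limit mentioned above is usually called the Doppler limit, and the standard textbook expression for it is T = ħΓ/(2k_B), where Γ is the natural linewidth of the cooling transition. The sketch below evaluates that formula; the atoms and the approximate linewidths are assumptions chosen for illustration, not values taken from this post.

```python
# Doppler cooling limit for a two-level atom: T_D = hbar * Gamma / (2 * k_B).
# With Gamma = 2 * pi * f, this is the same as T_D = h * f / (2 * k_B).
# The linewidths below are approximate and are assumptions for illustration.

H = 6.62607015e-34   # Planck constant, J*s
K_B = 1.380649e-23   # Boltzmann constant, J/K

# Approximate natural linewidths Gamma / (2*pi), in Hz
LINEWIDTH_HZ = {
    "sodium": 9.8e6,
    "rubidium": 6.07e6,
    "cesium": 5.2e6,
}


def doppler_limit_kelvin(linewidth_hz):
    """Lowest temperature predicted by the simple two-level model."""
    return H * linewidth_hz / (2 * K_B)


doppler_limits = {atom: doppler_limit_kelvin(f) for atom, f in LINEWIDTH_HZ.items()}

for atom, temperature in doppler_limits.items():
    print(f"{atom:>8}: {temperature * 1e6:.0f} microkelvin")
```

All three come out at a hundred to a few hundred microkelvin, consistent with the rough figure quoted above.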

This turns out to be a direct result of the more complicated internal structure of real atoms. When you put more of the details back in, you find that a combination of subtle factors that nobody thought would matter creates a situation where the atoms are constantly subjected to a force slowing their motion. In a particular cartoon picture of it, an atom exposed to the right laser field finds itself climbing up a “hill” and losing energy, but just as it reaches the top of the hill, an interaction with the light will suddenly drop it back to the bottom without recovering any of the lost energy. Then it has to climb again. Thus, the nifty Classical allusion of calling this “Sisyphus cooling” (which let me sneak in a citation of The Odyssey when I wrote about it in my thesis).

Cohen-Tannoudji was the head of the group that figured out the details of the Sisyphus cooling process in the late 1980s, and was at the forefront of research into how to push it farther when he gave that talk at Williams. I found the whole thing absolutely fascinating, and said something to that effect to one of my professors, who replied “Well, you know, we’re starting a laser cooling experiment here in the department.” And I was hooked.

I signed up right away to join that project, and did my undergrad honors thesis trying (unsuccessfully) to get a laser-cooling experiment off the ground. I applied to grad schools in the winter of 92-93, and got accepted to the Chemical Physics program at Maryland. As a bonus I was offered a summer job in the lab of Bill Phillips at the National Institute of Standards and Technology (NIST), where I stayed until I graduated in 1999.

This was a great time to get into the laser-cooling business, which was then just out of its infancy— in the kid analogy, it was at the stage of toddler-dom where it wandered around the house erratically sticking random things into its mouth. Pretty much anything you could think of to do with laser-cooled atoms was a historic first, and people tried to poke into all sorts of different areas of atomic physics.

Some of these were pretty obvious— the original motivation was to slow atoms down to make precision spectroscopic measurements easier, which is why a lot of the initial work was done at NIST and other standards labs. The current state-of-the-art in cesium atomic clocks starts with laser-cooled atoms, and the next-generation systems being researched today use either laser-cooled ions or “optical lattices” created by the Sisyphus cooling physics.

Another obvious (to a physicist) application of this is the study of matter waves. Quantum mechanics tells us that material objects have wave character with a wavelength that gets longer as the momentum gets smaller. Cold gases of atoms thus feature longer wavelengths and more obvious quantum effects, so laser cooling was quickly put to work exploring the interference and diffraction of slow-moving atoms. The extreme version of this is the phenomenon of BEC, where the wavelength of the atoms becomes comparable to the spacing between atoms in the gas, at which point a large fraction of the sample can “condense” into a single quantum wavefunction (if you have the right sort of atoms). This was predicted in 1925 by Satyendra Nath Bose and Albert Einstein, and first achieved in a sample of rubidium atoms in 1995 by Eric Cornell and Carl Wieman at JILA. The physics that leads to BEC is closely related to the physics of superconductivity, so this has attracted a significant amount of interest from physicists in condensed matter (mostly theorists, because laser-cooled analogues are way cleaner than real material systems).
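The comparison between wavelength and atom spacing can be made concrete with the thermal de Broglie wavelength, λ = h / sqrt(2π m k_B T), and the textbook condition for condensation in a uniform ideal gas, nλ³ ≳ 2.612. The sketch below is only illustrative: the rubidium-87 mass, the density of 10^20 atoms per cubic meter, and the three sample temperatures are assumptions, not numbers from any particular experiment.

```python
import math

# Thermal de Broglie wavelength versus spacing between atoms.
# Assumptions (for illustration only): rubidium-87, a uniform ideal gas,
# and a density of 1e20 atoms per cubic meter.

H = 6.62607015e-34       # Planck constant, J*s
K_B = 1.380649e-23       # Boltzmann constant, J/K
AMU = 1.66053906660e-27  # atomic mass unit, kg

MASS_RB87 = 86.909 * AMU
DENSITY = 1e20           # atoms per m^3 (assumed)
BEC_THRESHOLD = 2.612    # critical n * lambda^3 for a uniform ideal Bose gas


def thermal_wavelength(mass_kg, temperature_k):
    """lambda = h / sqrt(2 * pi * m * k_B * T)."""
    return H / math.sqrt(2 * math.pi * mass_kg * K_B * temperature_k)


def phase_space_density(density, mass_kg, temperature_k):
    """Number of atoms in a cube one thermal wavelength on a side."""
    return density * thermal_wavelength(mass_kg, temperature_k) ** 3


spacing = DENSITY ** (-1 / 3)  # typical distance between neighboring atoms

for label, temperature in [("room temperature", 300.0),
                           ("laser cooled", 100e-6),
                           ("evaporatively cooled", 100e-9)]:
    wavelength = thermal_wavelength(MASS_RB87, temperature)
    psd = phase_space_density(DENSITY, MASS_RB87, temperature)
    status = "condensed" if psd > BEC_THRESHOLD else "thermal gas"
    print(f"{label:>21}: wavelength/spacing = {wavelength / spacing:.2e}, "
          f"n*lambda^3 = {psd:.2e} ({status})")
```

Under these assumptions the wavelength is a tiny fraction of the spacing at room temperature, still well short of it at laser-cooling temperatures, and larger than it only around a hundred nanokelvin.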

And going in the other direction in terms of numbers, laser cooling gives you the ability to control all the properties of individual atoms— their motion and their internal states— to a really impressive degree. This allows laser-cooled ions and atoms to be used as “qubits” in quantum-information experiments— and even products from companies like IonQ (using trapped ions) and QuEra (neutral atoms).

Those are the biggest sub-sub-fields of cold-atom physics, but there are a bunch of other applications as well, in plasma physics and molecular physics and physical chemistry and even as a tool for things like radioactive dating of ice cores. If you’re a little more generous about where you draw the boundaries and pull in the general category of “using light forces to push things around,” there’s a whole slew of work in biophysics and nanotechnology drawing on “optical tweezers” to manipulate microscopic objects with focused laser beams.

Nearly all of this growth has occurred since I got into the field back in 1992 (please note that I am not in the least suggesting a causal relationship there…). The first successful BEC experiments were in 1995, and that year also saw the publication of the Cirac-Zoller paper on using trapped ions to do quantum computing, which really kicked that part of the field into gear. Along the way, the physicists who built the field have received significant international recognition. In 1997 (when I was a grad student at NIST) Claude Cohen-Tannoudji, Bill Phillips, and future US Secretary of Energy Steven Chu shared the Nobel Prize in Physics for the development of laser cooling techniques in the 1980s. Four years later (my first year as a professor), Eric Cornell, Carl Wieman, and Wolfgang Ketterle shared the 2001 Nobel for the achievement of BEC. Other Nobel prizes were shared by Theodor Hänsch (2005) and David Wineland (2012), two of the four physicists who first proposed laser cooling[1]. And if you’re doing the “generous boundaries” thing, Arthur Ashkin won a share of the 2018 prize for the development of optical tweezers.

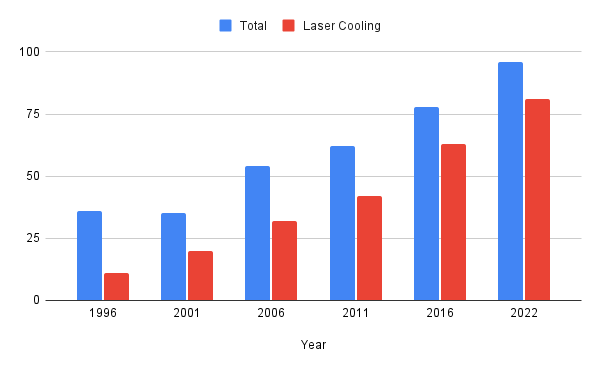

You can also see the growth of the field in the things that atomic physicists talk about. If you look at old programs from the annual meetings of the American Physical Society’s Division of Atomic, Molecular, and Optical Physics (DAMOP), the growth of laser cooling is striking. Back in 1996 (the oldest program they have online) the meeting was pretty small, and laser cooling was a smallish though not insignificant part of it— 11 of the 36 sessions of talks included at least one abstract mentioning cold atoms. This fraction has steadily increased from under a third in 1996 to better than 80% of sessions involving laser cooling in some way, and the meeting has grown enormously— the 2022 meeting featured 96 sessions of talks, 81 of which mentioned laser cooling. (That may even be an underestimate, as it’s just too many abstracts to go through them all…) I’ve regularly made statements of the form “Laser cooling absolutely revolutionized atomic physics,” but poking through these recently makes the cold-atom takeover starkly obvious in a relatively quantitative way.

I’ve been thinking about this (and made that graph) because something about the history and physics of laser cooling is a top contender for my Next Book Project. We’re past the thirty-year anniversary of my getting into this subject, and I still find it just as amazing as I did back when I first heard Cohen-Tannoudji talk about it. I’m mildly surprised that there isn’t a general-audience book yet that goes into this (something I’ve lamented multiple times in recent years when I wanted a reference to point to for a different project). The trick now is figuring out the right way to frame the science and the story to make it a workable proposal…

There’s a bit of self-motivation here: I’ve been a little cagey about what I’m working on just on principle, but stating it a bit more openly will force me to buckle down more and actually work on it (this is one of two steps in that direction I’ve taken this week). I’m probably not going to preview everything here, but bits and pieces are likely to show up; if you’d like that in your inbox, consider subscribing.

If you’re also older than dirt and want to reminisce about physics history from the late 20th century, the comments will be open.

[1] The other two of the four who first proposed laser cooling, Art Schawlow and Hans Dehmelt, also won Nobels (in ‘81 and ‘89, respectively), but for work done before the field took off.

I may have told you this story before, but I also got into atomic physics because I attended a lecture. In fact, I attended three lectures, all within a few days, in spring 2000. The first lecture was by Carl Wieman, and I had to skip my regular physics class to attend it because he was speaking to a class for physics majors (he was very keen on physics education even then) and I wasn't yet a physics major. The second was Carl's department colloquium that afternoon, and I similarly had to skip my regular physics lab to attend it. The third was a joint chemistry-physics lecture by Wolfgang Ketterle held in the evening a day or two later, and naturally I had to skip my regular physics recitation to attend *that*.

All three lectures were on BECs, and during Carl's colloquium, I asked what I now know to be a very good question, but was at the time just a naive one: "Why rubidium atoms?" Carl’s answer was essentially that they're big, fluffy and easy to laser cool, and they don't exhibit some of the interactions that make caesium (another early BEC candidate) so hard to Bose condense.

I'm not sure I fully understood this answer at the time, but the fact that I asked the question meant that during the reception after Ketterle's lecture, Carl, who was still around, recognized me and said hello. In the ensuing conversation, he discovered I was only a freshman, and immediately told me to apply for the NSF REU program at Colorado as soon as I was eligible.

This is how I ended up spending a very fine summer in Carl's lab shortly before he won the Nobel Prize (definitely no correlation), and how I went on to do a PhD on making BECs from a mixture of rubidium and caesium atoms. (I didn’t succeed, for reasons that include caesium being awkward, but other people managed it eventually.)

I didn't skip many physics classes, labs or recitations, but I sure am glad I skipped those three.

Great book topic. I have not read a science "news" book in a while.