In the comments to last week’s kind of meta post about the argument about whether particle accelerators justify their cost, Tom Metcalf brought up a point that struck me as interesting:

Setting aside space exploration, whenever this comes up I wonder if there are equivalently expensive big science instruments that could have been built, and could have answered specific questions, but weren't. I can think of other areas where instrumentation has steadily improved (scanning whatever microscopy) but it doesn't seem like a gigantic multi-billion dollar version would move the field forward.

This is an angle on the question of “Big Science” that I hadn’t considered before, and it seems worth poking at a little bit. That is, the history of high-energy physics has been a long story of escalating cost and consolidation: the first particle experiments were very small and used cosmic rays, then Van de Graaff, cyclotron, and synchrotron accelerators were developed at lots of places, but gradually the experiments got bigger and more expensive and were whittled down to two (Fermilab and CERN), and now just one (the LHC). Any “next generation” machine will almost certainly be a singleton, because the price tag will run to tens of billions of dollars.

It’s not clear, though, whether this sort of trajectory from small science to Big Science to singular science is inevitable. That is, we can reasonably ask whether we should expect other branches of science to follow a similar trajectory, where experiments start out relatively cheap, and then get both more expensive and less numerous until you’re really only left with one massive experiment at the scientific frontier because it’s simply too expensive to duplicate it.

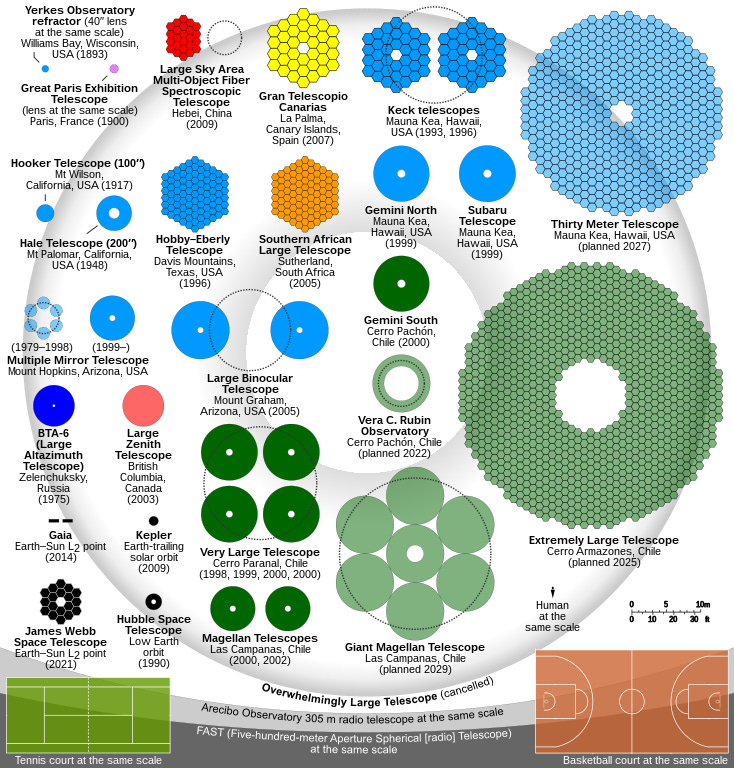

The other obvious candidate for a science on this general path is astronomy[1]. This infographic on telescopes has come around again recently, in the context of the Webb space telescope finally being deployed, and at some level seems to tell the same sort of story of instruments getting bigger and more expensive:

Except, I’m not sure it really does. The top-of-the-line Earth-based telescopes are roughly comparable in scale and thus resolution, meaning that each of them has an approximate peer. The same is true of the telescopes that are still in the planning stages: these are a cluster of roughly comparable instruments that are theoretically going to come online in the course of a few years.

Another candidate, closer to my scientific “home,” might be the steady expansion of precision measurement experiments looking for “new physics” in the nth decimal place of measurements of atomic properties, leveraging the phenomenal precision of modern atomic clocks to reach a scale where the tiny effects of exotic particles might become visible. A big selling point of this branch of AMO physics has long been that the scale of the experiments is such that you can have a lot of them in parallel at different places; indeed, some of the pivotal experiments have even been at very small institutions, like Larry Hunter’s long-running program at (sigh) Amherst College. More recently, though, there’s started to be some consolidation in this area, with the growth of multi-investigator projects like the ACME collaboration and the Center for Fundamental Physics at Northwestern. It’s still a loooong way from the scale of particle physics, but it’s been a joke in the field for a few years now that they’re on that track.

It’s worth asking what similarities and differences exist between these. All three are fields where doing better requires going up in scale and complexity— to higher energy; to larger mirrors for greater resolution and light-collection; to even more sensitive detection technologies. These improvements necessarily come at a price, and that’s been the biggest driver of the increased cost in particle physics: searching for new particles with larger masses (or just making more of known particles to allow better measurements of their properties) requires bigger tunnels, stronger magnets, physically larger detectors. The same physical size issue clearly applies in astronomy, with the need to add more and higher-quality mirrors, and you can make a bit of a case for something similar in the precision-measurement area, with the need for better lasers, better environmental controls, and the like.

At the same time, astronomy and precision-measurement share a feature that doesn’t apply in the particle case, namely that they’re both fundamentally observational sciences. They’re not creating exotic particles that require high energies to make, they’re looking at stuff that already exists out in the world. In the case of astronomy, that very directly motivates the creation of at least two comparable instruments, because you want one that can see the Northern Hemisphere sky and one that can see the Southern Hemisphere sky. There are objects in the north that can’t be seen by telescopes in the south, so you can at least double your target space without needing to massively upgrade the telescope technology itself. Indeed, there’s still a lot of work done by telescopes that are well behind the leading edge of the technology, simply because there are so many things out there that have never been studied at all.

For precision measurements, you have some opportunity to improve your sensitivity without needing to upgrade expensive facilities, simply by changing target systems. You can see a bit of this in the career of Dave DeMille (formerly of Yale, now at Chicago). When I worked down the hall from him, he was working on an experiment to search for exotic physics using lead oxide molecules; when that turned out to have unfavorable properties, he switched to thorium monoxide (for the ACME collaboration), and now he’s doing slightly different things with strontium fluoride and thallium fluoride. The shift from one molecule to another with more favorable properties allows a jump in sensitivity without needing a massively expensive upgrade in the performance of the lasers and other costly equipment. This diversity of possible targets with slightly different properties is a factor that acts to slow the increase of costs and the consolidation of research groups.

As you move away from fields that are in some sense “really” looking at fundamental physics, it’s even less clear that there’s any reason to expect consolidation and increasing prices. There are a lot of condensed-matter and life-science research programs that draw on a smallish number of large and expensive user facilities (synchrotron sources of far-UV or X-ray light, for example), but these don’t really have the same absolute need to push to larger machines at higher energies. Improvements in flux, frequency resolution, etc., sure, but the nature of the objects of study in those fields limits the energy you would even want to employ. And as with astronomy and precision AMO, these are fundamentally observational sciences, and the incredible diversity of species that could be observed means that even a machine that isn’t at the absolute frontier is still useful. If anything, there’s even some hope for a reversal of the trajectory, at least if you listen to people in the high-harmonic-generation community, who are working toward tabletop sources of light that currently requires a massive machine.

So, in a lot of respects, I think the position of experimental particle physics may be uniquely bad in this sense. That is, they have no real path forward that doesn’t involve constructing a singular “next generation” machine with an eye-popping price tag. It’s not at all clear that other fields of science would necessarily follow the same sort of trajectory, where everything ends up converging on a single massive project.

But, then again, I am very much Not A Life Scientist, so it may be that there’s some similar trend in play in the biomedical space that’s obvious to people who do work in those fields, but hidden from an outsider like me. In which case, I’d be interested to hear about it, so drop a line in the comments…

[1] I will note that I’m skipping past the example that Tom mentioned in the next paragraph of his comment, namely gravitational wave observatories, mostly because there was never a time when that was cheap enough to be widely distributed. That’s been a single massive project for decades, now. I do agree with him, though, that I’d probably prefer to fund new and better versions of LIGO than a next-generation LHC.

I’m no life sciences person either, but it seems to me that their equivalent is the need to replicate studies at very large scale to truly be sure of ANYTHING they are seeing. The literal cost may not be in the same realm as colliders but it seems like there is a logistical equivalent cost.

"In the case of astronomy, [being an observational science] very directly motivates the creation of at least two comparable instruments, because you want one that can see the Northern Hemisphere sky and one that can see the Southern Hemisphere sky."

I'd add that another motivation for building comparable instruments is to improve resolution by networking the telescopes and doing interferometry. But could interferometry networks be thought of as a sort of consolidation as well? For example, the Event Horizon Telescope that produced the famous M87 black hole image a few years ago consists of about a dozen individual telescopes that were all built independently by various groups, and now they're working together as one.

-GS