The better part of a decade ago— 2015-16 or thereabouts— I was on a panel discussing emerging technology for an audience with a lot of venture-capital types in it. This was in the era when people were still pitching projects using the phrase “Uber for ____” and having it taken seriously, and there was a lot of talk about “disrupting” this or that industry. The audience was also very Democratic-leaning—it would be more plausible to call them “neoliberal” than a lot of other groups now derided that way—so the discussion was mostly about how these tech-driven changes could be nudged in directions that would make lives better.

At one point, just to shake things up a little, I said something along the lines of “You know, if you actually want to change society for the better, you should stop looking at ways to ‘disrupt’ low-wage jobs, and invest in tech that would replace lawyers and doctors. It’s only when you start threatening the livelihood of people who have money and influence that we’ll get any meaningful change in policy.” That got some nervous laughter, but at the time there wasn’t really anything on tap that seemed particularly relevant, so the discussion moved on to other things.

I was reminded of this because I’ve been asked to comment on “AI” a lot recently, since anybody who knows the meaning of the phrase “linear algebra” is expected to have an opinion on the subject. More specifically, Matt Yglesias posted a piece about self-driving taxi services this morning that reminded me of that exact exchange. (Spoiler: He’s maybe a hair more excited about the short-term potential of the tech than is justified, then spins it into a discussion of regulatory policies in major cities. But you got both of those from the bit where I said “Matt Yglesias.”)

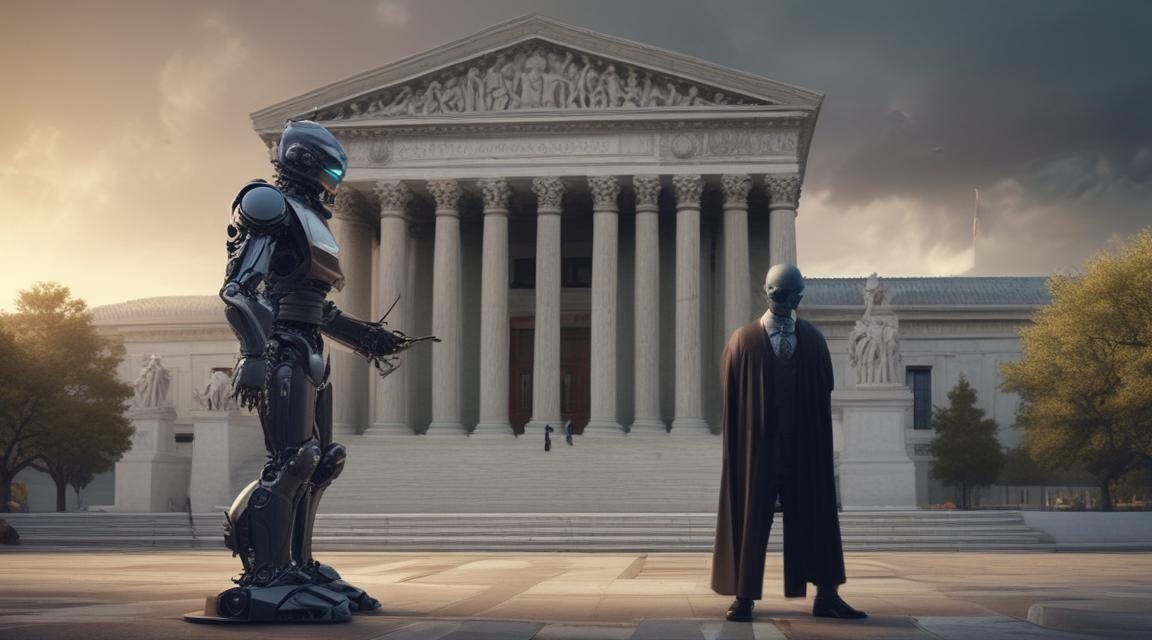

The combination of recently being asked to talk at some length about “AI” and Yglesias’s discussion of disrupting taxis led me to the thought that the current situation might plausibly be approaching the thing I flippantly asked for back in 2016-ish. That is, I’m not super convinced that “AI” as it’s currently constituted will be displacing blue-collar jobs fast enough and at a large enough scale to spur the policy-making class into action in a meaningful way. But the roiling cauldron of linear algebra that’s currently being sold as “artificial intelligence” might actually be positioned to “disrupt” a particular class of white-collar jobs in ways that might be interesting in the near term.

To be clear, I don’t think the tools we have now for generating images and text constitute “artificial intelligence” in a really meaningful sense, or are all that plausible as a step on the way to something that would. What we’ve developed are devices that are good at predicting the sorts of images and texts people are looking for when they ask other people to make an image or write a passage of text. They do this by referring to a vast corpus of pre-existing images and texts, and mixing and matching elements of what’s been done before to make something that’s novel but not really new (if that distinction makes sense). The best ones come close to reproducing their training data, but don’t really move beyond it.

The primary potential impact of this on the actual quest for artificial intelligence seems most likely to be at a philosophical, definitional sort of level. The degree to which people are impressed by the output of these tools may shed a bit of light on how much of what we think of as core human behavior is just remixing existing stuff to generate something that plausibly meets the expectations of whoever asked for it. Which might prove useful in refining the definition of what “intelligence” actually means in the same way that past developments in the field have. I’m thinking here of a conversation I had some years back with a couple of colleagues about how there was once an assumption that it would take some massive breakthrough to make a computer that could beat humans at chess, while computer vision would be straightforward. Decades on, chess has moved out of “Artificial Intelligence” into “Algorithm Design” and we’ve learned a ton about exactly how amazing it is that we can analyze visual data on the fly with our existing brains.

The other thing that’s revealed by the degree to which people are agitated about the current “AI” tools is that whether or not this constitutes actual “intelligence,” it’s a decent approximation of a lot of jobs. I’ve played around with these a bit as a way to distract myself from overinflated claims being made in meetings, and my impression is that even the free GPT models will get you something like 80% of the way to what you would get from a reasonably competent actual human asked to produce semi-formulaic text documents. It’s not hard to believe that refinements in the models that now exist could push that percentage close to 100%. You might well get over 90% by just framing the request better than what I can come up with while half-listening to someone else holding forth in a meeting.

That’s an interesting proposition because it means we’re plausibly 80% of the way to having computer tools that can do many of the sorts of “email jobs” currently held by recent college graduates. And that’s a group of people who(se parents) have enough money and cultural influence to get the policy-making class to take an interest in this. As demonstrated by the fact that I’ve been asked about this repeatedly, and expect to be in many more meetings in which this prospect is gravely discussed. It’s not difficult to imagine the technology expanding to the point where it can do many of the jobs currently held by recent graduates of law schools and medical schools, and then we’re really grabbing hold of the high-tension cables carrying power to the policy-making class.

The hard-to-answer question here is what policy should this drive, and the impossible-to-answer question is what policy will result (because if I’ve learned anything in four-plus decades of watching American politics, it’s that the relationship between those is tenuous at best). Given my personal political beliefs, what I would like to see here is a move toward decoupling “having a comfortable life” from “having a particular sort of white-collar job”: expanding public services and the like to let the off-loading of entry-level drudgery onto digital entities free young-ish people up to do more interesting and fulfilling things with their lives. I’m sort of temperamentally in favor of a Universal Basic Income sort of world, and a technology that takes a big bite out of entry-level white-collar drudgery could plausibly be a thing that starts to move us in that direction. A coalition of young people who feel entitled to a comfortable lifestyle and their affluent and influential parents who feel like they’ve already paid for their kids to live well could be the kind of thing to start toward meaningful change.

On the flip side, though, what we might get is ham-handed regulation attempting to prevent the use of artificial drudges so as to preserve the existing system, particularly in exclusionary-guild professions like law and medicine. I suspect that in the long term such a move would ultimately come to be seen as a futile rearguard action, but it could make a lot of things kind of suck in the short and medium term.

So, while I’m skeptical that what’s going on now is meaningful in terms of real “artificial intelligence” (let alone an existential threat to humanity), it might be enough to be politically meaningful, and that can have practical importance. I don’t really believe we’re imminently approaching the precipice of either Skynet or Iain Banks’s Culture, but we definitely are living in interesting times.

This is, I realize, not the kind of position that’s going to get me keynote-speaker invitations at tech conferences.

If you dislike it (or like it enough to be moved to public agreement), the comments will be open:

"technology that takes a big bite out of entry-level white-collar drudgery"

I think that entry level drudgery is valuable training to be a mid-level employee.

I agree with your sentiment toward UBI, but I think even better is a more generous EITC that reduces the urge to prevent white collar labor displacing technical change, but leaves more incentive to do something ELSE. Not many people would opt for total indolence, but why create an incentive?